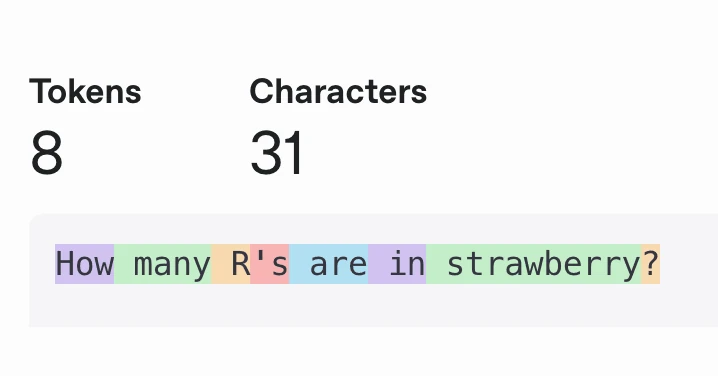

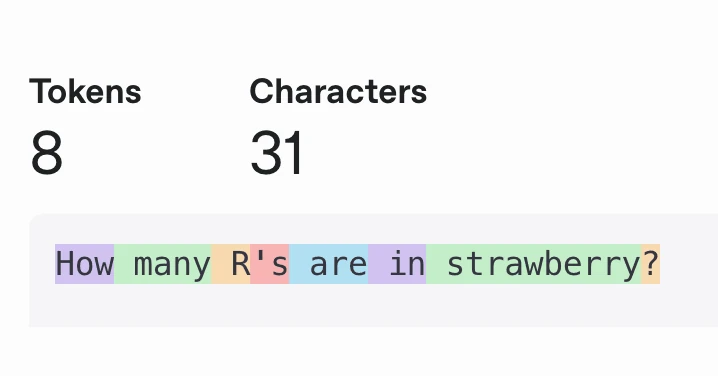

Current LLMs almost always process groups of characters, called tokens, instead of processing individual characters. They do this for performance reasons: grouping 4 characters (on average) into a token shortens the sequence the model has to process by about 4x, so the same context window covers roughly 4x more text.
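To make the arithmetic concrete, here is a minimal sketch. It uses a toy tokenizer that cuts text into fixed 4-character chunks, which is an assumption for illustration only: real tokenizers (BPE and similar) produce variable-length tokens. The `chunk_tokens` and `attention_pairs` helpers are hypothetical names, and the quadratic cost model assumes standard full self-attention.

```python
import math


def chunk_tokens(text: str, chars_per_token: int = 4) -> list[str]:
    """Toy tokenizer: split text into fixed-size character groups.

    Real tokenizers produce variable-length tokens; this only mimics
    the "about 4 characters per token" average.
    """
    return [
        text[i:i + chars_per_token]
        for i in range(0, len(text), chars_per_token)
    ]


def attention_pairs(sequence_length: int) -> int:
    """Number of position pairs that full self-attention compares."""
    return sequence_length ** 2


text = "Current LLMs almost always process groups of characters."
tokens = chunk_tokens(text)

# Sequence length seen by a character-level model vs. a token-level model.
print("characters:", len(text))
print("tokens:    ", len(tokens))

# Under the quadratic attention assumption, a 4x shorter sequence
# needs roughly 16x fewer pairwise comparisons.
print("attention cost ratio:",
      attention_pairs(len(text)) / attention_pairs(len(tokens)))
```

The point of the sketch is only the ratio: the token sequence is about a quarter as long as the character sequence, and the attention cost shrinks faster than that.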

A few jobs ago, I worked at a company that collected data from disparate sources, then processed and deduplicated it into spreadsheets for ingestion by the data science and customer support teams. Some common questions the engineering team got were:

To debug these problems, the process was to try to reverse engineer where the data came from, then try to guess which path that data took through the monolithic data processor.

This is the story of how we stopped doing that, and started storing references to all source data for every piece of output data.

In a recent post, Zvi described what he calls "The Most Forbidden Technique":

> An AI produces a final output [X] via some method [M]. You can analyze [M] using technique [T], to learn what the AI is up to. You could train on that. Never do that.
>
> You train on [X]. Only [X]. Never [M], never [T].
>
> Why? Because [T] is how you figure out when the model is misbehaving.
>
> If you train on [T], you are training the AI to obfuscate its thinking, and defeat [T]. You will rapidly lose your ability to know what is going on, in exactly the ways you most need to know what is going on.

The article specifically discusses this in relation to reasoning models and Chain of Thought (CoT): if we train a model not to admit to lying in its CoT, it might still lie in the CoT and just not tell us.

When you're subject to capital gains taxation, the government shares in some of the upside, but when you have capital losses, the government shares in the downside too. Because of this, the actual risk (and reward) of any given portfolio is lower than it seems. To counteract this, you should consider shifting your allocation toward riskier assets.
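A rough worked example of that reasoning, under simplifying assumptions that are mine and not from the post: a single flat tax rate, losses that are fully deductible at that same rate, and no risk-free return to account for. The 20% rate, the 60% target allocation, and the function names are all hypothetical.

```python
def after_tax_return(pre_tax_return: float, tax_rate: float) -> float:
    """Return kept by the investor when gains are taxed and losses are
    deductible at the same flat rate (a simplifying assumption)."""
    return pre_tax_return * (1.0 - tax_rate)


def adjusted_risky_weight(target_weight: float, tax_rate: float) -> float:
    """Risky allocation that gives the same after-tax exposure as
    `target_weight` would give with no tax, capped at 100%."""
    return min(1.0, target_weight / (1.0 - tax_rate))


TAX_RATE = 0.20       # hypothetical flat capital gains rate
TARGET_WEIGHT = 0.60  # hypothetical risky allocation you actually want

# Both the upside and the downside shrink by the same factor,
# so the spread between good and bad outcomes narrows.
good_year = after_tax_return(0.30, TAX_RATE)
bad_year = after_tax_return(-0.30, TAX_RATE)
print(f"after-tax gain: {good_year:.2%}, after-tax loss: {bad_year:.2%}")

# Holding more of the risky asset restores the intended exposure.
weight = adjusted_risky_weight(TARGET_WEIGHT, TAX_RATE)
print(f"risky weight to hold: {weight:.2%}")
```

Real tax rules (loss carryforward limits, different short and long term rates, tax-advantaged accounts) break the symmetry this sketch relies on, so treat the scaling factor as an upper-level intuition and not a recommendation.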

7 years ago (!) I wrote a post comparing ZIP and tar, plus gz or xz, and concluded that ZIP is ideal if you need to quickly access individual files in the compressed archive, and tar + compression (like tar + xz) is ideal if you need maximum compression. Since then, I discovered pixz, which seems to provide the best of both worlds: Maximum compression with indexing for quick seeking.
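The trade-off between the two older formats can be sketched with Python's standard library. This example does not use pixz (it is a separate command-line tool); it only contrasts ZIP, which keeps a central directory and compresses each file separately, with tar + xz, which compresses the whole archive as one stream. The file names and contents are made up for illustration.

```python
import io
import tarfile
import zipfile

# Hypothetical sample files, kept in memory so nothing touches disk.
files = {
    f"file{i}.txt": f"contents of file {i}\n".encode()
    for i in range(3)
}

# ZIP: each member is compressed on its own and listed in a central
# directory, so one member can be located and read directly.
zip_buf = io.BytesIO()
with zipfile.ZipFile(zip_buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
    for name, data in files.items():
        zf.writestr(name, data)

# tar + xz: the archive is one compressed stream, so reaching a member
# means decompressing everything that comes before it.
tar_buf = io.BytesIO()
with tarfile.open(fileobj=tar_buf, mode="w:xz") as tf:
    for name, data in files.items():
        info = tarfile.TarInfo(name)
        info.size = len(data)
        tf.addfile(info, io.BytesIO(data))

# Read the same single member back from each archive.
zip_buf.seek(0)
with zipfile.ZipFile(zip_buf) as zf:
    from_zip = zf.read("file2.txt")

tar_buf.seek(0)
with tarfile.open(fileobj=tar_buf, mode="r:xz") as tf:
    from_tar = tf.extractfile("file2.txt").read()

print(from_zip == from_tar == files["file2.txt"])
```

With three tiny files the difference is invisible, but on a large archive the tar + xz read has to scan from the start of the stream, which is the gap an indexed format aims to close.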