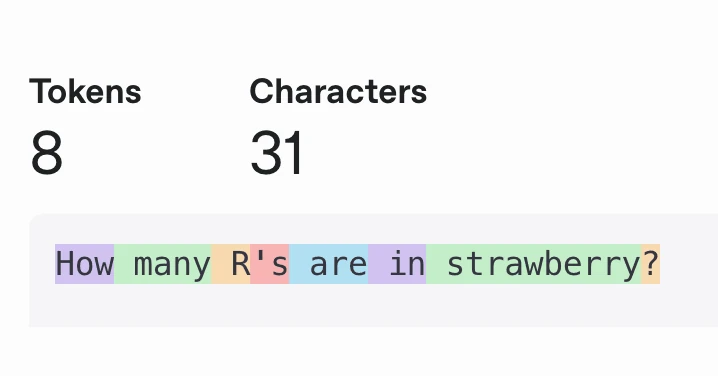

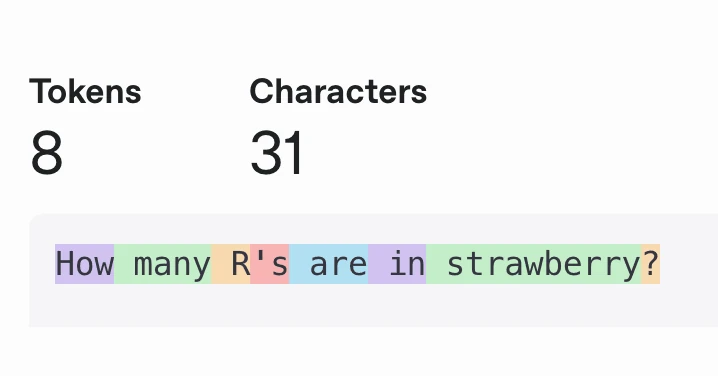

Current LLMs almost always process groups of characters, called tokens, rather than individual characters. They do this for performance reasons: grouping 4 characters (on average) into a token makes the input sequence about 4x shorter, which increases the effective context length by roughly 4x.
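To make the arithmetic concrete, here is a toy sketch in Python. It assumes a fixed grouping of exactly 4 characters per token, which is a simplification: real tokenizers produce variable-length tokens, and 4 is only the average mentioned above. The function names `chunk_into_tokens` and `effective_context_chars` are hypothetical and exist only for this illustration.

```python
def chunk_into_tokens(text: str, chars_per_token: int = 4) -> list[str]:
    """Split text into fixed-size character groups (a stand-in for real tokens)."""
    return [text[i:i + chars_per_token] for i in range(0, len(text), chars_per_token)]


def effective_context_chars(context_tokens: int, chars_per_token: float = 4.0) -> float:
    """Estimate how many characters fit in a window of `context_tokens` tokens."""
    return context_tokens * chars_per_token


text = "Current LLMs process tokens, not characters."
tokens = chunk_into_tokens(text)

# The token sequence is about 4x shorter than the character sequence.
print(len(text), len(tokens))

# A window of 2048 tokens therefore covers about 4x as many characters.
print(effective_context_chars(2048))
```

The model sees the same text either way; only the sequence length changes, so a fixed-size window covers roughly four times as much text when it is measured in tokens.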